In order to keep the object at a constant temperature a cooling facility is installed.

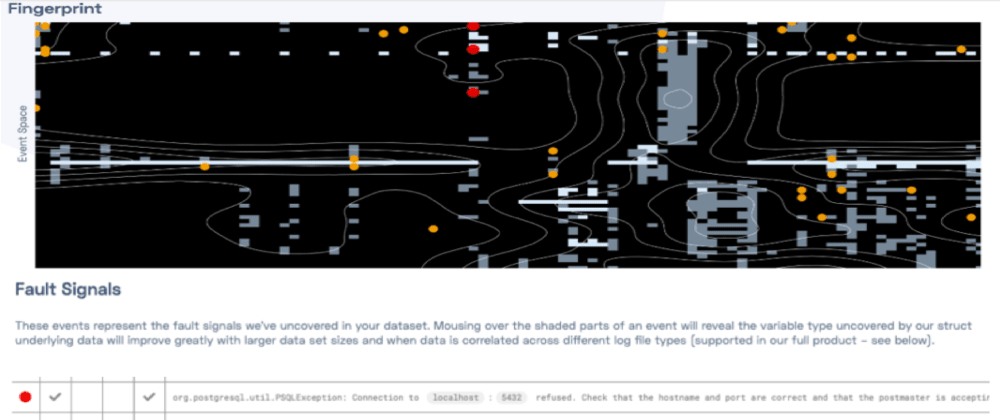

However, there is a dynamically changing heat-flow from a different physical process (e.g. The process models the situation where an object needs to be kept at a constant temperature (e.g. Hence, we can use the difference between the input sequence and the reconstruction sequence – the so called reconstruction error – as a measure for anomaly.įigure 1: High-Level Overview over the transformer architecture Running Exampleįor this paper, we use as a running example a controlled cooling process. As the model will be trained on system runs without error, the model will learn the nominal relationships within a system run (e.g.

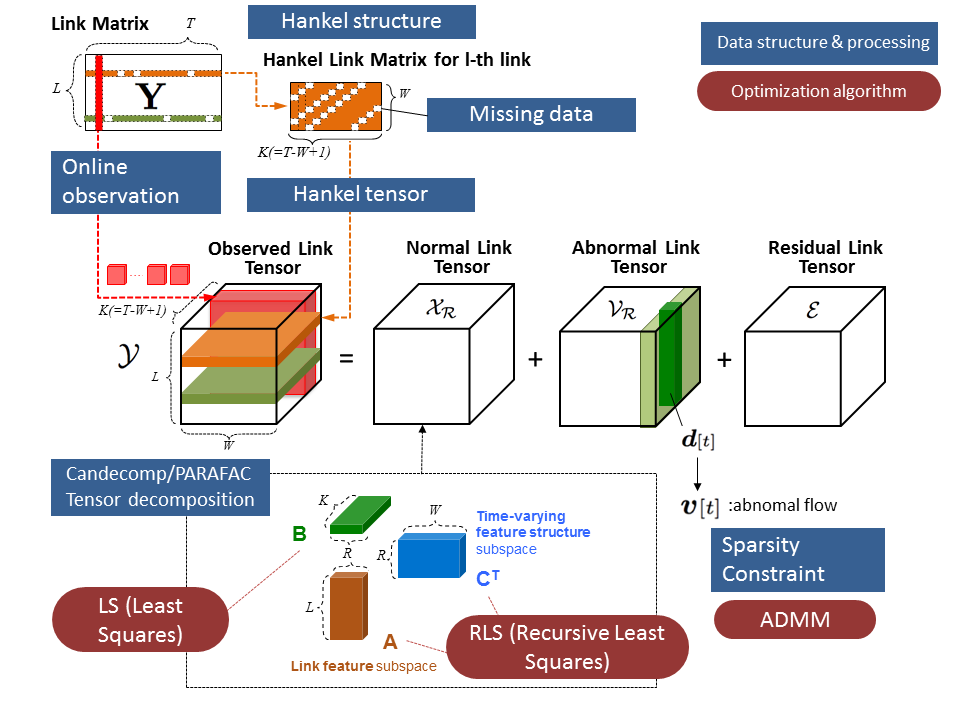

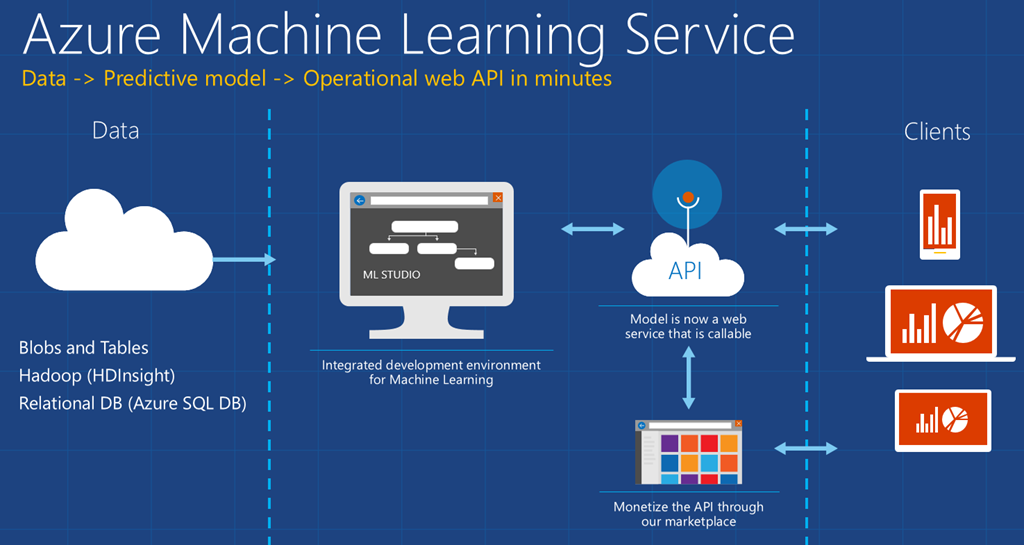

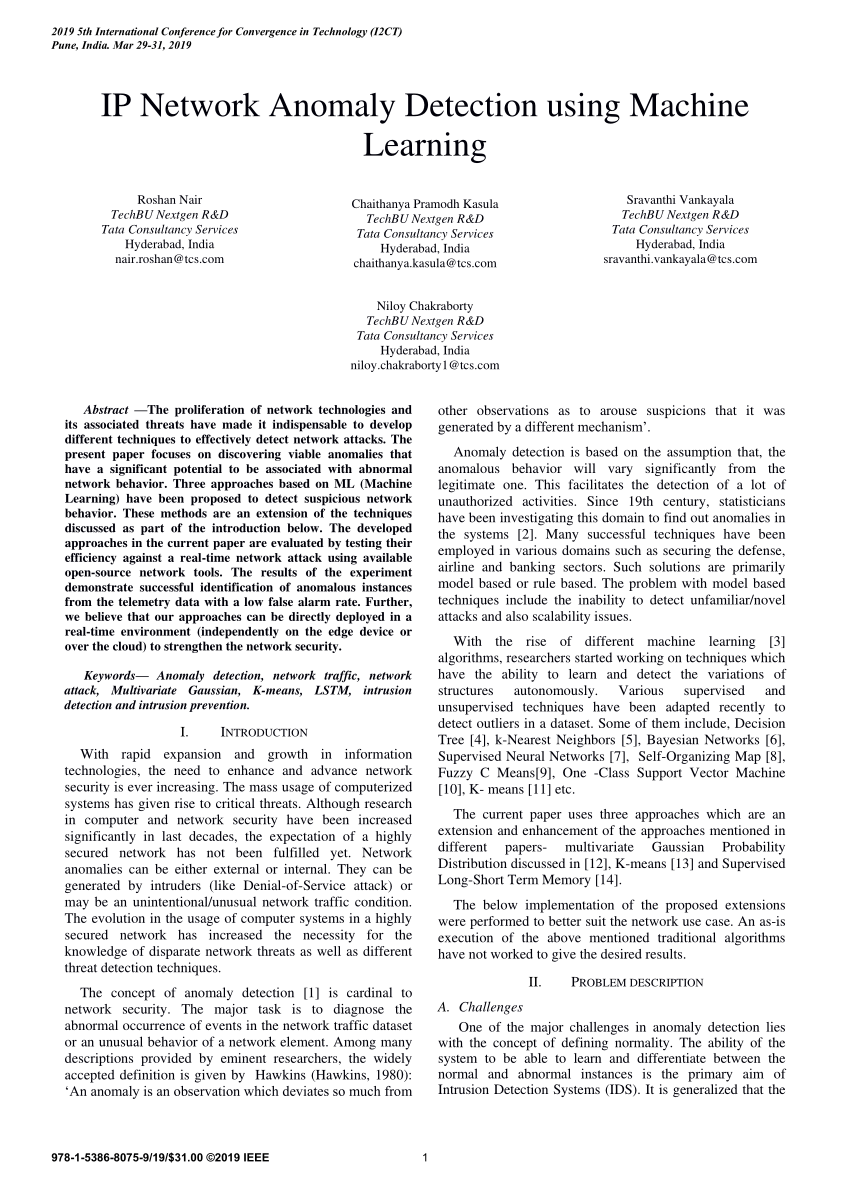

The idea is to train the model to compress a sequence and reconstruct the same sequence from the compressed representation. What makes the transformer architecture different from other encoder-decoder architectures is the fact that it uses no variant of recurrent network (such as long-short-term-memory) and instead captures dependencies between different time instants by a preprocessing technique called positional encoding together with an architecture pattern called attention.įor the task of anomaly detection, we use the transformer architecture in an autoencoder configuration. Then it uses a decoder to construct the output sequence from the compressed representation. The transformer architecture uses an encoder to compress an input sequence into a fixed size vector capturing the characteristics of the sequence. Transformer Deep-Learning Architectureįor the anomaly detection we employ the popular transformer architecture that has been successful especially in the field of natural language processing and excels especially in transforming sequences. In this paper we demonstrate how the transformer architecture can be deployed for anomaly detection. The transformer architecture leverages several concepts (such as encoder/decoder, positional encoding and attention), which enables the respective models to efficiently cope with complex relationships of variables especially with long-ranging temporal dependencies (e.g. A type of architecture which is the base for many current state-of-the-art language models is the transformer architecture. In the last years, major improvements have been made on using deep-learning techniques for NLP, which resulted in models that are, for example, translate text in a way nearly indistinguishable from human translations. This is not much of a surprise as text is also a form of sequential data with many complex interdependencies. 
These types of models are similar to models that have been used in text-analysis and natural language processing (NLP). Long-Short-Term-Memory or Gated-Recurrent-Unit) are applied for this task. Typically, some form of sequential model (e.g. Recently, deep-learning techniques are applied to the detection of anomalies as well. There are various established techniques to detect anomalies in time-series data, for example, dimension reduction with principal component analysis.

By detecting anomalies automatically, we can identify problems in a system and can take actions before a larger damage is inflicted. For example, because they have been collected from a machine suffering a degradation. Anomalies are parts of such a time-series which are considered as not normal. Often, we are dealing with time-dependent or at least sequential data, originating, for example, from logs of a software or sensor values of a machine or a physical process. Anomaly-Detection with Transformers Machine Learning Architecture Anomaly DetectionĪnomaly Detection refers to the problem of finding anomalies in (usually) large datasets.
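The reconstruction-error criterion described above can be illustrated with a small sketch. It is not the model used in this article: the trained transformer autoencoder is replaced by a hypothetical stand-in, `moving_average_reconstruct`, which simply smooths the input, and the temperature values are synthetic. The function names, the mean-squared-error metric and the "mean plus three standard deviations" threshold rule are all assumptions made for illustration.

```python
import math
from statistics import mean, pstdev
from typing import Callable, List, Sequence


def reconstruction_error(original: Sequence[float],
                         reconstructed: Sequence[float]) -> float:
    """Mean squared difference between a sequence and its reconstruction."""
    if len(original) != len(reconstructed):
        raise ValueError("sequences must have the same length")
    return mean((x - y) ** 2 for x, y in zip(original, reconstructed))


def moving_average_reconstruct(seq: Sequence[float],
                               half_width: int = 1) -> List[float]:
    """Hypothetical stand-in for a trained autoencoder: a centred moving
    average that reproduces smooth (nominal) signals well and spikes badly."""
    out = []
    for i in range(len(seq)):
        lo = max(0, i - half_width)
        hi = min(len(seq), i + half_width + 1)
        out.append(mean(seq[lo:hi]))
    return out


def fit_threshold(nominal_errors: Sequence[float], k: float = 3.0) -> float:
    """Assumed rule: mean error on nominal runs plus k standard deviations."""
    return mean(nominal_errors) + k * pstdev(nominal_errors)


def is_anomalous(seq: Sequence[float],
                 reconstruct: Callable[[Sequence[float]], List[float]],
                 threshold: float) -> bool:
    """Flag a run whose reconstruction error exceeds the threshold."""
    return reconstruction_error(seq, reconstruct(seq)) > threshold


# Synthetic error-free runs: a temperature oscillating slightly around 20.
nominal_runs = [
    [20.0 + 0.2 * math.sin(0.3 * t + phase) for t in range(30)]
    for phase in (0.0, 0.5, 1.0, 1.5)
]
nominal_errors = [
    reconstruction_error(run, moving_average_reconstruct(run))
    for run in nominal_runs
]
threshold = fit_threshold(nominal_errors)

# A faulty run: the first nominal run with a sudden spike at t = 15.
faulty_run = list(nominal_runs[0])
faulty_run[15] += 5.0

print(is_anomalous(nominal_runs[0], moving_average_reconstruct, threshold))
print(is_anomalous(faulty_run, moving_average_reconstruct, threshold))
```

In a real setup, `moving_average_reconstruct` would be replaced by the forward pass of the trained autoencoder, and the threshold would be calibrated on held-out error-free runs rather than on the training runs themselves.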
